Model & Code Validation – ko44.e3op, tif885fan2.5, chogis930.5z, 382v3zethuke

Model and code validation for ko44.e3op and its related variants sets out a transparent framework for auditing data provenance, model assumptions, and code artifacts. It relies on versioned datasets, reproducible experiments, and independent reviews to detect and counter drift and bias. The discussion centers on standards for data flows, computational steps, and observable outcomes, so that results stay traceable across deployments. The pipelines and benchmarks described here are practical starting points; the criteria themselves are preliminary and still need concrete implementation details and further scrutiny.

What Model and Code Validation Is and Why It Matters

Model and code validation is the systematic process of assessing whether a model, along with its accompanying code, accurately represents a given problem domain and reliably produces correct, interpretable results under specified conditions.

This evaluation examines assumptions, data integrity, and computational consistency, ensuring transparency.

Rigorous testing also makes a model's limitations explicit, which supports informed decisions about how far the model and its code can be trusted.

Setting Up a Consistent Validation Framework for ko44.e3op Family

A consistent validation framework for the ko44.e3op family requires a structured, repeatable approach that links model assumptions, data flows, and computational steps to observable outcomes. It formalizes checks for data drift and model drift, establishes baseline metrics, and schedules independent audits. This alignment keeps results transparent and reproducible, and it leaves teams free to adapt methods while quality controls stay consistent across deployments.
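One way to make a data drift check concrete is the population stability index (PSI), which compares the binned distribution of a feature in a baseline sample against a current sample. The sketch below is illustrative only: the function name, the bin count, and the smoothing constant `eps` are assumptions, and nothing here is taken from an actual ko44.e3op toolchain.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Return the PSI between two samples of one numeric feature.

    Bin edges are derived from the baseline sample only, so the baseline
    acts as the fixed reference distribution.
    """
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has zero range; PSI is undefined")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped to the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


baseline_sample = [i / 100 for i in range(100)]
shifted_sample = [0.5 + i / 200 for i in range(100)]
print(population_stability_index(baseline_sample, baseline_sample))
print(population_stability_index(baseline_sample, shifted_sample))
```

A commonly quoted rule of thumb treats PSI above roughly 0.25 as a significant shift, but that threshold is a convention rather than a guarantee, so it should be calibrated against the baseline metrics the framework establishes.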

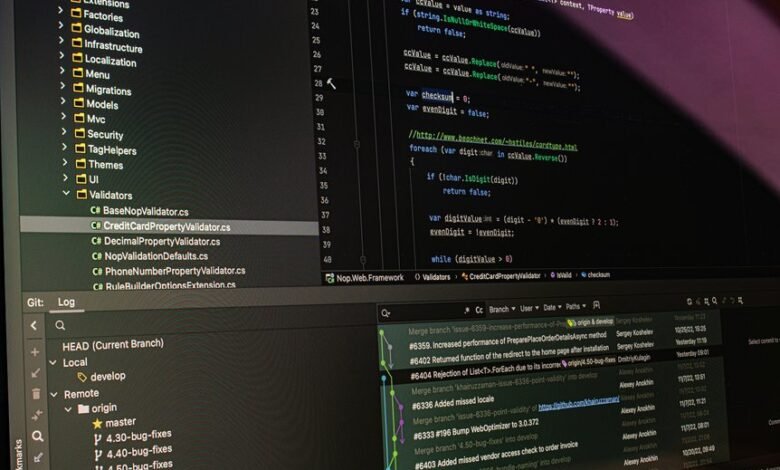

Practical Validation Pipelines: Data, Models, and Code Artifacts

To implement a robust validation regime for the ko44.e3op family, a practical suite of pipelines must be established that comprehensively handles data, models, and code artifacts. The approach emphasizes data validation and code reproducibility, with explicit checks, versioned datasets, model provenance, and standardized artifact formats. Processes promote transparency, traceability, and repeatable experiments while preserving freedom to adapt methods across environments.
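A versioned dataset can be pinned with a content-hash manifest: record a SHA-256 digest for every file, store the manifest alongside the experiment, and compare it before each run. The following is a minimal sketch using only the Python standard library; the function names and the manifest layout are hypothetical, not a format prescribed for ko44.e3op.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root, POSIX style) to its digest."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): sha256_of_file(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def diff_manifest(expected, actual):
    """Report files that are missing, unexpected, or changed."""
    shared = expected.keys() & actual.keys()
    return {
        "missing": sorted(expected.keys() - actual.keys()),
        "unexpected": sorted(actual.keys() - expected.keys()),
        "changed": sorted(k for k in shared if expected[k] != actual[k]),
    }
```

In use, `build_manifest` would run once when a dataset version is published, and `diff_manifest` would run at the start of each validation job; any non-empty list in the result means the data no longer matches the recorded version.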

Common Pitfalls and How to Benchmark for Reproducibility

Common pitfalls in reproducibility arise from fragmentation across data, models, and code, as well as from inconsistent environments and ambiguous provenance.

The analysis framework should emphasize disciplined data governance, formal code provenance, and explicit environment capture.

Benchmarking succeeds through standardized datasets, versioned artifacts, and cross-platform tests. Together these enable independent replication, transparent reporting, and traceable results.
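Explicit environment capture can be as simple as writing the interpreter version, platform string, and installed package versions next to each benchmark result. The sketch below uses only the standard library (`platform`, `sys`, `importlib.metadata`); the function name and the shape of the returned record are assumptions made for illustration.

```python
import json
import platform
import sys
from importlib import metadata


def capture_environment():
    """Collect a JSON-serializable snapshot of the running Python environment."""
    packages = {}
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:  # skip distributions with incomplete metadata
            packages[name] = dist.version
    return {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "platform": platform.platform(),
        "executable": sys.executable,
        "packages": dict(sorted(packages.items())),
    }


if __name__ == "__main__":
    print(json.dumps(capture_environment(), indent=2))
```

A snapshot like this does not capture system libraries, drivers, or hardware, so it complements rather than replaces a container image or lock file.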

Frequently Asked Questions

How to Interpret Validation Metrics in Real-World Deployments for ko44.e3op?

Interpreting validation metrics for a deployed ko44.e3op model means looking at calibration, drift indicators, and performance broken down by data segment. Aggregate scores can hide interpretability pitfalls and real-world drift, so metrics should be read alongside robust monitoring, periodic retraining, and transparent reporting to sustain trust and safety.
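Calibration can be summarized with the expected calibration error (ECE), which bins predictions by predicted probability and compares each bin's mean prediction to the observed positive rate. The sketch below is a binary-classification variant with equal-width bins; the function name and default bin count are assumptions, and the inputs shown are toy values rather than ko44.e3op results.

```python
from typing import Sequence


def expected_calibration_error(
    probs: Sequence[float],
    labels: Sequence[int],
    n_bins: int = 10,
) -> float:
    """ECE for binary predictions.

    probs  -- predicted probability of the positive class, each in [0, 1]
    labels -- observed outcomes, each 0 or 1
    """
    if len(probs) != len(labels) or not probs:
        raise ValueError("probs and labels must be non-empty and equal length")
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        # A probability of exactly 1.0 falls into the last bin.
        idx = min(int(p * n_bins), n_bins - 1)
        bins[idx].append((p, y))

    total = len(probs)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_prob = sum(p for p, _ in members) / len(members)
        positive_rate = sum(y for _, y in members) / len(members)
        # Weight each bin's gap by the share of samples it holds.
        ece += (len(members) / total) * abs(mean_prob - positive_rate)
    return ece


print(expected_calibration_error([0.0, 0.0, 1.0, 1.0], [0, 0, 1, 1]))
print(expected_calibration_error([1.0, 1.0, 1.0, 1.0], [0, 0, 1, 1]))
```

Running the same function separately on each data segment is one way to see whether a model that looks calibrated overall is miscalibrated for a particular subgroup.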

Which Licenses Govern Data and Model Artifacts in This Workflow?

Licensing compliance is determined by the licenses attached to data and model artifacts; ensure provenance metadata is stored. The workflow requires documented data provenance and alignment with licenses governing data usage and model distributions.

How to Compare Different Validation Frameworks Objectively for ko44.e3op?

Comparing validation frameworks for ko44.e3op requires a structured rubric that covers privacy-preserving evaluation and reproducibility benchmarks. For each framework, document its assumptions, metrics, data handling, and tooling, then test robustness, interpretability, bias, and scalability under the same conditions so that the scores are directly comparable.

What Are Unseen-Edge-Case Failure Modes in the ko44.e3op Family?

Unseen-edge-case failure modes in the ko44.e3op family include atypical input distributions, data drift, and timing anomalies. Inputs on topics unrelated to the intended domain are a further risk. Robust monitoring and strong model governance help detect and mitigate such incidents systematically.

How to Budget Compute for End-To-End Validation Pipelines?

Budgeting validation requires estimating compute, storage, and orchestration costs across data prep, test runs, and monitoring. As a rough guide, 20–30% of a project budget may go toward deployment metrics, though the right share depends on the project and should be checked against actual usage. Careful planning reduces overruns and speeds up end-to-end validation.
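The estimate can be worked as simple arithmetic: multiply hours per run, runs per month, and hourly rate for each stage, add storage, and apply a contingency margin. The sketch below shows that calculation; the stage names, rates, and volumes are placeholder numbers chosen for the example, not measured costs.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Stage:
    name: str
    hours_per_run: float
    runs_per_month: int
    hourly_rate: float  # cost per compute hour, in any one currency


def monthly_cost(
    stages: Iterable[Stage],
    storage_gb: float = 0.0,
    storage_rate_per_gb: float = 0.0,
    contingency: float = 0.0,
) -> dict:
    """Return a cost breakdown; contingency is a fraction such as 0.25."""
    compute = sum(s.hours_per_run * s.runs_per_month * s.hourly_rate for s in stages)
    storage = storage_gb * storage_rate_per_gb
    subtotal = compute + storage
    return {
        "compute": compute,
        "storage": storage,
        "contingency": subtotal * contingency,
        "total": subtotal * (1 + contingency),
    }


# Placeholder figures for illustration only.
example_stages = [
    Stage("data_prep", hours_per_run=2.0, runs_per_month=10, hourly_rate=0.5),
    Stage("test_runs", hours_per_run=4.0, runs_per_month=5, hourly_rate=2.0),
]
print(monthly_cost(example_stages, storage_gb=100, storage_rate_per_gb=0.02, contingency=0.25))
```

Keeping the breakdown per stage makes it easier to see which part of the pipeline drives an overrun once real usage numbers replace the placeholders.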

Conclusion

In sum, the ko44.e3op validation framework lays out an auditable path from data provenance to model output. By enforcing versioned datasets, transparent data flows, and reproducible code artifacts, it enables disciplined verification across iterations. Independent audits and standardized artifact formats help detect and limit drift, and they keep each claim traceable and verifiable. No framework can guarantee reliability, but this one provides a solid foundation for trustworthy experimentation and deployment.